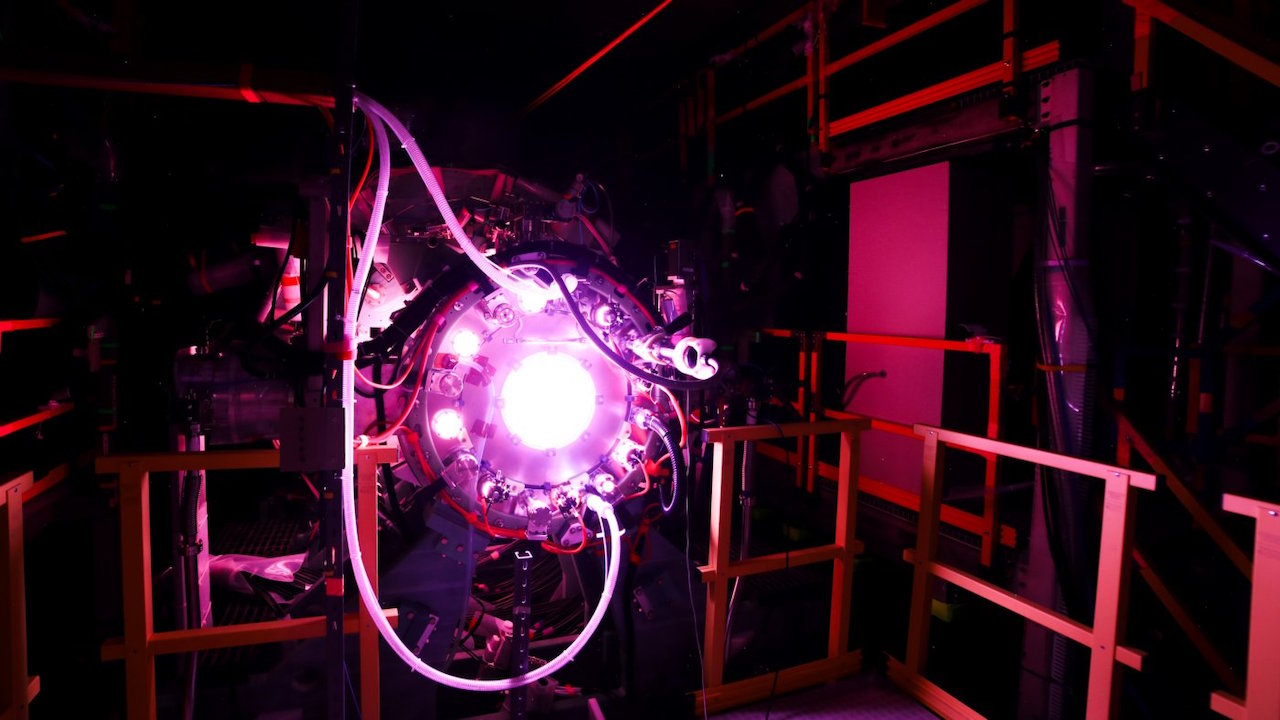

When we think of quantum computing, the image is often dramatic: shimmering qubits, exotic machines cooled to near absolute zero, new physics emerging from the unknown. But behind that spectacle lies a quieter, more profound shift unfolding right now, one in which quantum systems don’t replace classical ones but partner with them.

And in 2026, IBM aims to formalize that partnership with its roadmap promise to “deliver mapping and profiling tools for quantum + HPC workflows.”

This isn’t mere incremental progress; it’s the beginning of hybrid computing at utility scale. Classical high-performance computing (HPC) has already transformed industries: climate modelling, fluid dynamics, oil-and-gas simulation, large-scale AI training. But when quantum processing units (QPUs) join the orchestra alongside CPUs and GPUs, we step into truly new territory, one where classical and quantum systems work hand in glove. As IBM describes it: “Quantum-centric supercomputing combines quantum and classical resources in parallelised workflows to solve problems beyond either domain alone.”

The Vision: What IBM Research Promises for 2026

IBM’s 2026 roadmap lays down several milestone statements that bear repeating, because they chart the transition from novelty to utility. Among them:

- The goal to run circuits of up to 7,500 gates on up to 360 qubits in partnership with HPC systems.

- The commitment to “deliver mapping and profiling tools for quantum + HPC workflows.”

- The aim to define a use-case benchmarking toolkit for quantum advantage in a quantum+HPC context.

Put plainly: IBM is saying that by 2026, hybrid quantum-classical workflows should not only be feasible but measurable, manageable and accessible for real users and partners. They want researchers and industry practitioners to start modelling workflows that invoke classical HPC resources and quantum circuits in orchestrated fashion—mapping the problem to the quantum portion, profiling performance, measuring bottlenecks, and iterating.
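To make the profiling idea a little more concrete, here is a minimal sketch that treats the roadmap figures listed above (7,500 gates, 360 qubits) as an envelope and checks candidate quantum kernels against it. The `KernelProfile` class, the helper functions and the sample kernels are all hypothetical illustrations, not part of any IBM tool.

```python
from dataclasses import dataclass

# Envelope taken from the roadmap figures quoted in this article.
MAX_QUBITS = 360
MAX_GATES = 7_500


@dataclass(frozen=True)
class KernelProfile:
    """Hypothetical summary a profiling tool might report for one quantum kernel."""
    name: str
    qubits: int
    gates: int


def fits_envelope(profile: KernelProfile) -> bool:
    """Return True if the kernel stays within both limits."""
    return profile.qubits <= MAX_QUBITS and profile.gates <= MAX_GATES


def over_budget(profiles: list[KernelProfile]) -> list[str]:
    """Names of kernels that would need to be split or simplified."""
    return [p.name for p in profiles if not fits_envelope(p)]


# Made-up kernels, purely for illustration.
kernels = [
    KernelProfile("active_space_small", qubits=120, gates=4_800),
    KernelProfile("active_space_large", qubits=400, gates=6_000),
    KernelProfile("deep_ansatz", qubits=200, gates=9_100),
]
print(over_budget(kernels))  # ['active_space_large', 'deep_ansatz']
```

A real profiler would presumably report far more than two numbers (connectivity, error budgets, runtime estimates), but even this crude check shows how a workflow could decide which sub-problems are worth sending to a QPU.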

What makes this noteworthy is that it shifts expectations. Instead of quantum computing being a separate silo or an exotic “blue-sky” playground, IBM is positioning it as a first-class component within conventional HPC workflows.

Why Hybrid Quantum-HPC Matters

To appreciate the importance of quantum + HPC workflows, we need to step back and see the broader computing landscape. Traditional HPC systems are built around large arrays of CPUs and GPUs, sometimes augmented by custom accelerators (e.g., FPGAs). These systems excel at massively parallel tasks, large simulations and data-heavy operations. But they hit walls in domains where quantum phenomena call for fundamentally different computational features, for example simulating quantum materials, solving high-dimensional optimisation problems, or modelling molecular systems beyond classical approximations.

Quantum computers hold promise for these “hard” regimes—but they have limitations: qubit counts, error rates, decoherence, gate fidelity, connectivity, and so on. They are not yet ready to completely replace classical systems.

Hybrid quantum-HPC workflows combine the best of both worlds. Classical systems handle breadth, scale, mature infrastructure; quantum systems provide depth, non-classical structure, new algorithms. IBM puts it best: “Quantum-centric supercomputing uses quantum and classical together to run computations beyond what either could achieve alone.”

In an era where AI, materials science, cryptography and optimisation problems demand more than Moore’s law can deliver, hybrid workflows become an enabling architecture, not just a research curiosity.

The Enabling Software and Architecture: Real Tools, Real Progress

To enable hybrid workflows, the machinery is as much software as hardware. Fortunately, IBM has already laid down significant foundations.

Qiskit and Orchestration

IBM’s open-source SDK, Qiskit, provides the software stack for quantum algorithm development. According to IBM, Qiskit now supports “heterogeneous orchestration” with plugins to integrate with HPC workload managers.

For example, the Qiskit C-API (introduced in version 2.1) enables researchers to embed quantum circuit construction and execution in performance-critical languages like C, C++ and Fortran—languages that dominate classical HPC environments. This facilitates hybrid HPC-quantum integration at the code level.

Middleware and Runtime

IBM’s blog “The quantum-centric supercomputing of tomorrow—today” outlines how hybrid jobs can be submitted where classical and quantum tasks are orchestrated through unified resource managers (e.g., Slurm) and runtime environments.

Furthermore, middleware research shows that frameworks such as “Pilot-Quantum” can provide a unified abstraction for resource and task management across CPUs, GPUs and QPUs in hybrid systems. For instance, a study published on arXiv describes how Pilot-Quantum supports hybrid workloads and reports, for example, a 15× speed-up for quantum machine learning tasks when integrated into HPC environments.
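As a rough picture of what such orchestration involves, the sketch below orders a small hybrid job by its dependencies and groups tasks into waves that could run concurrently on different resource types. It uses only Python’s standard library and does not call Slurm, Qiskit or Pilot-Quantum; the task names, resource labels and the `plan_waves` helper are assumptions made for illustration.

```python
from graphlib import TopologicalSorter

# Hypothetical hybrid job: task name -> (resource type, set of prerequisite tasks).
TASKS = {
    "preprocess": ("cpu", set()),
    "build_circuits": ("cpu", {"preprocess"}),
    "run_kernel": ("qpu", {"build_circuits"}),
    "train_surrogate": ("gpu", {"preprocess"}),
    "postprocess": ("cpu", {"run_kernel", "train_surrogate"}),
}


def plan_waves(tasks):
    """Group tasks into waves; every task in a wave has all prerequisites finished."""
    sorter = TopologicalSorter({name: deps for name, (_, deps) in tasks.items()})
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only to make the output stable
        waves.append([(name, tasks[name][0]) for name in ready])
        sorter.done(*ready)  # a real scheduler would wait for completion here
    return waves


for index, wave in enumerate(plan_waves(TASKS)):
    print(index, wave)
```

In this toy plan the GPU task and the circuit-building task land in the same wave, which is the kind of overlap a unified resource manager is meant to exploit.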

Hardware Stacks and Roadmap

IBM’s hardware architecture is built around the “IBM Quantum System Two™” for modular scaling. The roadmap shows 156-qubit processors, plus modular systems reaching 360 qubits by 2026 in hybrid HPC mode.

In short: the pieces are in motion, not only conceptually but in practice, with software stacks, APIs, hardware modules and orchestration frameworks maturing.

What Will Hybrid Workflows Look Like in Practice?

Let’s imagine how a real hybrid quantum-HPC workflow might run in 2026, thanks to IBM’s tools and architecture.

A materials science company wants to simulate a complex molecular catalyst for carbon capture. The problem is too large for classical methods alone and too error-prone for today’s purely quantum systems. Instead, the workflow proceeds as follows:

- The classical HPC cluster performs coarse sampling and pre-processing: model geometry generation, classical approximations, high-dimensional feature extraction.

- A mapping tool (provided by IBM’s 2026 deliverable) analyzes the problem structure and identifies quantum-amenable sub-tasks (e.g., fermionic Hamiltonian simulation, eigenvalue problems) and defines quantum kernels.

- Profiling tools evaluate the quantum kernels: how many qubits are needed, what depth of circuit (targeting up to 7,500 gates on up to 360 qubits, per IBM’s 2026 expectation).

- The workflow manager then schedules a hybrid job: classical tasks and quantum tasks are co-orchestrated. Classical preprocessing and post-processing occur on the HPC cluster; quantum kernels execute on a QPU embedded or closely connected to the HPC environment.

- Results from the quantum kernel feed back into classical workflows (e.g., parameter updates, optimisation loops).

- The end result is a hybrid HPC-quantum solution that reaches an answer not feasible with classical HPC alone or with present-day quantum hardware alone.
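The feedback loop in the steps above can be sketched in a few lines. This is a toy, assuming a one-parameter kernel: `quantum_kernel` is a classical stand-in for a QPU call (it returns the ideal expectation value ⟨Z⟩ = cos θ after an RY(θ) rotation), and the classical side updates the parameter with gradient descent, using the parameter-shift rule to obtain the gradient from two extra kernel evaluations. All function names here are hypothetical.

```python
import math


def quantum_kernel(theta: float) -> float:
    """Stand-in for a QPU call: <Z> after RY(theta) on |0>, which equals cos(theta).

    A real workflow would submit a circuit here and get back a noisy estimate.
    """
    return math.cos(theta)


def parameter_shift_gradient(theta: float) -> float:
    """Gradient of the kernel via the parameter-shift rule (two kernel calls)."""
    shift = math.pi / 2
    return 0.5 * (quantum_kernel(theta + shift) - quantum_kernel(theta - shift))


def hybrid_minimise(theta: float, learning_rate: float = 0.4, steps: int = 60) -> float:
    """Classical gradient-descent loop that repeatedly calls the quantum kernel."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta


theta_opt = hybrid_minimise(theta=0.3)
print(theta_opt, quantum_kernel(theta_opt))
```

Starting from θ = 0.3, the loop drives θ toward π, where the kernel value reaches its minimum of −1. In a production setting the classical side would be an HPC job and each kernel call a scheduled quantum task, but the shape of the loop is the same.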

These workflows will not only exist but be measurable and benchmarked using the use-case benchmarking toolkit IBM plans to define.
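Measuring such a workflow can start very simply. The sketch below, using only Python’s standard library, accumulates wall-clock time per stage and reports the slowest one. The stage names and the `profiled` and `bottleneck` helpers are hypothetical, and the `time.sleep` calls are placeholders for real classical and quantum work.

```python
import time
from collections import defaultdict
from contextlib import contextmanager

# Accumulated wall-clock seconds per stage name.
stage_seconds = defaultdict(float)


@contextmanager
def profiled(stage: str):
    """Add the time spent inside the with-block to the given stage's total."""
    start = time.perf_counter()
    try:
        yield
    finally:
        stage_seconds[stage] += time.perf_counter() - start


def bottleneck() -> str:
    """Stage with the largest accumulated wall-clock time."""
    return max(stage_seconds, key=stage_seconds.get)


# Placeholder workloads; the sleeps stand in for real stages.
with profiled("classical_preprocess"):
    time.sleep(0.002)
with profiled("quantum_kernel"):
    time.sleep(0.05)
with profiled("classical_postprocess"):
    time.sleep(0.002)

print(bottleneck())
```

Wall-clock totals like these say nothing about queue time, data movement or circuit quality, which is presumably where dedicated profiling tools would add the most value.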

Real Figures and Metrics: Setting the Expectations

It’s one thing to talk conceptually about hybrid compute—let’s look at concrete numbers that IBM has published:

- IBM’s 2026 target: run up to 7,500 gates on up to 360 qubits in a quantum processor paired with HPC.

- The quantum-centric supercomputing blog indicates that IBM currently manages “34 Kubernetes clusters distributed across 10 datacenters, with 49 terabytes of physical memory and over 10,000 compute cores … running micro-services for quantum workloads.”

- Qiskit’s ecosystem: over 13 million downloads, and a transpiler that IBM claims is “83× faster than competing SDKs.”

- A research paper reports that middleware like Pilot-Quantum achieved a 15× speed-up for quantum machine learning workloads when integrated into HPC.

These metrics frame the scale of ambition: what previously might have been a few qubits in isolated lab experiments is now approaching hundreds of qubits, real software stacks, automatic mapping and profiling tools, and production-grade orchestration.

Why This Matters for Industry, Science & Society

This hybrid compute vision is more than technical bravado—it carries potential transformational outcomes:

- Industry acceleration: Fields like materials discovery, drug development, financial modelling, supply-chain optimisation can benefit from hybrid workflows that cross classical and quantum boundaries.

- Scientific breakthroughs: Problems that challenge both classical HPC and current quantum devices (e.g., large quantum many-body systems, advanced simulation of chemical processes) may find viable pathways via hybrid compute.

- Economic impact: As companies adopt quantum-HPC workflows, demand for new programming models, ecosystem suppliers, hardware infrastructure, and data-centre integrations will rise, creating an entirely new layer of the technology supply chain.

- Societal value: Climate modelling, energy infrastructure optimisation, next-gen materials for clean tech—all are likely beneficiaries of hybrid quantum-HPC architecture.

IBM’s push for 2026 is not simply about building a bigger quantum computer—it is about creating an ecosystem where quantum and classical computing co-exist, co-evolve, and co-deliver.

The Road Ahead: Challenges and How IBM Is Tackling Them

As with any frontier technology, hybrid quantum-HPC workflows face significant hurdles. But IBM’s roadmap addresses many of them head-on. Let’s explore some of the key challenges.

Software and ecosystem maturity

Hybrid workflows require rich software tooling—not just for quantum circuit compilation, but for resource scheduling, workload management, error mitigation, profiling and mapping. IBM’s roadmap commits to mapping/profiling tools for quantum+HPC workflows by 2026.

Hardware scalability and quality

Running complex circuits (7,500 gates on 360 qubits) demands high connectivity, low error rates, and modular scalability. IBM’s roadmap names the Nighthawk processor and the later Starling system as steps along that path.

Interoperability and orchestration

Classical HPC environments have mature scheduling and resource management tools. Integrating quantum resources into those flows requires new abstractions. Research like Pilot-Quantum and Qiskit’s orchestration plugins show viable paths.

Benchmarking and use-case definition

Understanding when and where quantum-HPC workflows provide advantage is key. IBM’s roadmap includes a “use-case benchmarking toolkit” for 2026.

Users, skills and adoption

For hybrid compute to scale, users must learn new models, languages, abstractions (e.g., C-API for Qiskit) and frameworks. IBM’s Qiskit C-API (v2.1) is an example of enabling classical HPC programmers to adopt quantum features.

IBM’s commitment to these areas suggests the obstacles are recognised—and part of the plan, not afterthoughts.

What IBM’s Success Will Look Like by 2026

If IBM hits its hybrid workflow milestones in 2026, we should see some meaningful changes:

- Researchers and industry practitioners can run workflows where quantum kernels are integrated into classical HPC pipelines—measured, profiled, mapped and optimised.

- Tooling is available so users needn’t write low-level quantum circuits manually; rather they can select domain problems, let mapping/profiling tools identify quantum segments, and run hybrid jobs.

- The term “quantum advantage” will start shifting from academic proofs of concept toward practical use cases executed in hybrid environments.

- The supply-chain and ecosystem around hybrid compute (software vendors, middleware, hardware integrators, data-centre operators) will begin to form, with procurement, deployment, and operations at scale.

- Industries like materials, chemicals, finance or logistics will begin pilot projects using hybrid quantum-HPC workflows, moving beyond pure quantum “experiments.”

A Few Words from a Strategic Leader

Strategic considerations matter every bit as much as technological breakthroughs. As Mattias Knutsson, a Strategic Leader in Global Procurement and Business Development, reflects:

“When you build at the scale of hybrid quantum-HPC systems, the technology is only part of the equation. Procurement readiness, supply-chain resilience, ecosystem partnerships, flexible software licensing and infrastructure integration are equally critical. The value comes when the compute model fits the business model—when quantum-HPC becomes a turnkey workflow not a lab project. IBM’s mapping and profiling tools for quantum + HPC workflows hint at that shift—from research to operational compute.”

His words remind us that for hybrid architectures to succeed, we must align hardware, software, procurement, business processes—and not treat the quantum piece in isolation.

Looking Ahead with Hope and Purpose

As we approach 2026, the promise of quantum-HPC workflows feels both ambitious and attainable. With IBM’s roadmap in place and software and middleware advances already visible, we’re not just speculating about the future; we’re preparing for it.

The notion of quantum computing being confined to labs or isolated experiments is giving way to a new reality: hybrid compute as a service, embedded in classical supercomputing ecosystems, delivering real value for real problems. If IBM’s timeline holds, we’ll look back on 2026 as the year when quantum and HPC stopped being separate narratives and began to co-author the next chapter of computational progress.

In the end, this is not simply about faster computers or more qubits. It’s about partnership—between quantum and classical, between research and industry, between procurement and development. It’s about building compute ecosystems that welcome quantum as a native player, not a novelty.

And that vision, grounded in mapping tools, profiling workflows and hybrid orchestration, is what gives IBM’s 2026 roadmap its real power. Because when measurement, management and mapping meet quantum and HPC, we unlock new possibilities, not just for science, but for what humanity can compute, create and discover.