Every time you type a prompt into a large language model (LLM), whether it’s ChatGPT, Claude, or Gemini, you’re tapping into a computing system so massive that its energy footprint has been compared to that of entire nations. Behind the friendly conversational tone lies an invisible cost: the sheer power consumption of training and running these models.

In 2019, researchers from the University of Massachusetts Amherst estimated that training a single large AI model (including its architecture search) could emit up to 284,000 kilograms of CO₂, roughly equivalent to the lifetime emissions of five cars. By 2025, with LLMs doubling in size every 6–12 months, the energy requirements are only accelerating.

And yet, the demand is relentless. Governments, companies, and individuals all want bigger, smarter, more capable AI systems. The paradox is clear: how do we make AI sustainable at scale?

Enter quantum algorithms—the promise of harnessing quantum computing not only for speed, but for efficiency. Could this new frontier in computation hold the key to making AI greener?

This blog explores the intersection of Green AI and quantum computing, examining how quantum algorithms might reduce LLM power consumption, what the technology’s current limits are, how it compares to other sustainability strategies, and how it could reshape the global balance of AI innovation.

The Energy Problem of AI at Scale

Training a large AI model is not just about clever code. It requires:

- Billions of parameters: GPT-4 reportedly has over 1 trillion parameters. Training such models means running endless iterations of matrix multiplications.

- Data centers on overdrive: Microsoft, Google, and Amazon are building AI-specific superclusters that require hundreds of megawatts per site.

- Cooling costs: Data centers are often water-cooled. A 2023 study led by researchers at the University of California, Riverside estimated that training GPT-3 could consume about 700,000 liters of clean freshwater just for cooling.

Estimates suggest that by 2030, AI alone could account for 3–5% of global electricity demand if current growth rates continue.
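To see how figures like these are typically put together, here is a minimal back-of-the-envelope sketch. It assumes the common accounting of accelerator power times runtime, scaled by a data center overhead factor (PUE) and a grid carbon intensity. The function name and every input value below are hypothetical placeholders, not measurements of any real model.

```python
def training_footprint(num_accelerators, avg_power_watts, hours,
                       pue, grid_kg_co2_per_kwh):
    """Return (facility_energy_kwh, co2_kg) for one training run.

    pue: power usage effectiveness, i.e. total facility energy divided
         by the energy used by the computing hardware alone.
    """
    # Energy drawn by the accelerators themselves, in kWh.
    it_energy_kwh = num_accelerators * avg_power_watts * hours / 1000.0
    # Add cooling and other facility overhead.
    facility_energy_kwh = it_energy_kwh * pue
    # Convert energy to emissions using the local grid's carbon intensity.
    co2_kg = facility_energy_kwh * grid_kg_co2_per_kwh
    return facility_energy_kwh, co2_kg


# Hypothetical inputs: 1,000 accelerators at 400 W for 30 days,
# PUE of 1.2, grid intensity of 0.4 kg CO2 per kWh.
energy_kwh, co2_kg = training_footprint(
    num_accelerators=1000,
    avg_power_watts=400,
    hours=24 * 30,
    pue=1.2,
    grid_kg_co2_per_kwh=0.4,
)
print(f"Energy: {energy_kwh:,.0f} kWh, emissions: {co2_kg:,.0f} kg CO2")
```

The structure of the formula matters more than the numbers: the same run on a cleaner grid, or in a facility with lower overhead, yields a very different footprint.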

This is where the “Green AI” movement emerges—an effort to optimize algorithms, hardware, and policies so that AI innovation does not come at the expense of the climate.

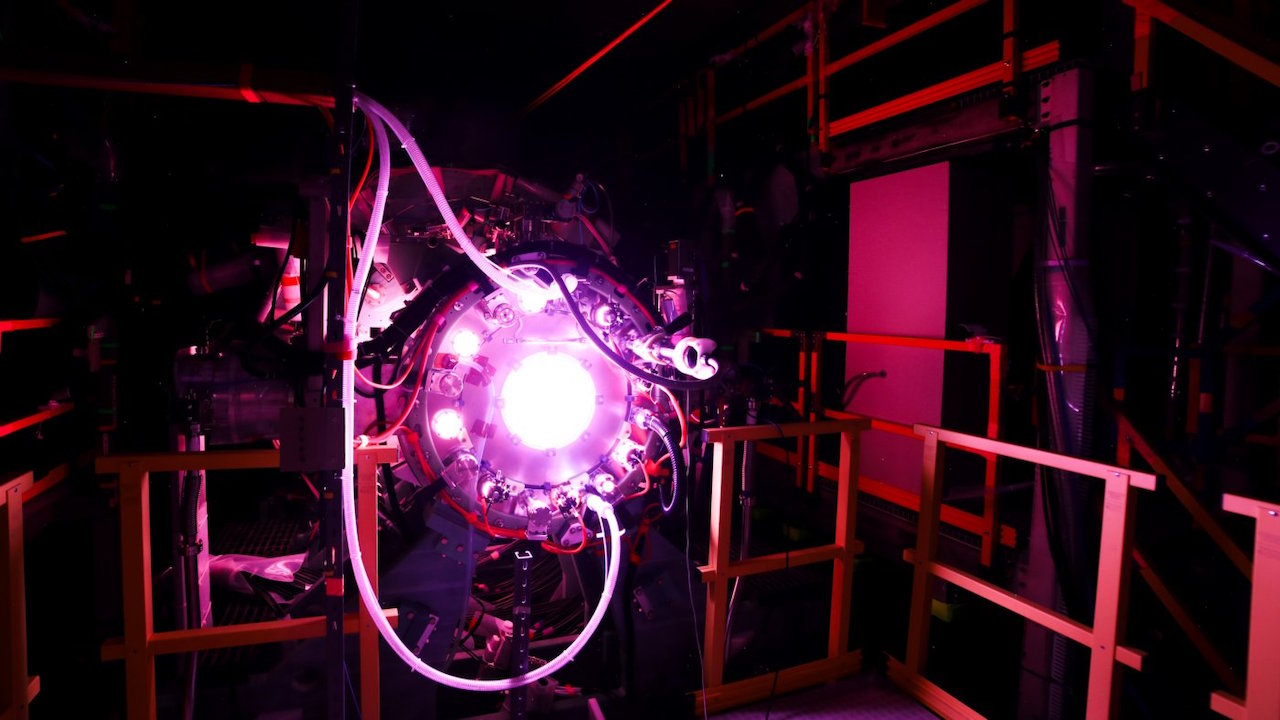

Why Quantum Computing Enters the Conversation

Quantum computing is often associated with abstract physics and cryptography. But its real promise lies in solving certain classes of computational problems in far fewer steps than the best known classical methods, in some cases exponentially fewer.

Traditional AI models rely on linear algebra: matrix multiplications, tensor operations, and gradient calculations. Quantum algorithms can potentially handle these operations using quantum superposition and entanglement, reducing the number of computations dramatically.

For example:

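- HHL Algorithm (Harrow, Hassidim, Lloyd, 2009): A quantum algorithm that, under specific conditions (a sparse, well-conditioned matrix and efficient loading of the input), can solve systems of linear equations exponentially faster than classical methods. LLMs are not simply systems of linear equations, since they interleave linear layers with nonlinear operations, but linear algebra dominates their compute, so this line of work is relevant.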

- Quantum Kernel Methods: Instead of brute-force searching through massive datasets, quantum-enhanced kernels may classify data more efficiently.

- Quantum-inspired algorithms: Even before scalable quantum computers arrive, hybrid algorithms inspired by quantum techniques are helping optimize neural network training.

The big idea: if quantum algorithms can cut down the number of computations by orders of magnitude, they could also slash energy consumption.
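A rough way to see both the appeal and the fine print of HHL is to compare how the step counts scale. The sketch below uses the textbook asymptotic forms with all constant factors dropped: about N · s · √κ · log(1/ε) for the classical conjugate gradient method and about log(N) · s² · κ² / ε for HHL, where N is the system size, s the sparsity, κ the condition number, and ε the target error. The function names and sample values are illustrative assumptions, and the comparison ignores the cost of loading data into a quantum state and the fact that HHL returns a quantum state rather than the full solution vector.

```python
import math


def classical_cg_cost(n, sparsity, kappa, eps):
    """Rough conjugate gradient scaling: N * s * sqrt(kappa) * log(1/eps)."""
    return n * sparsity * math.sqrt(kappa) * math.log(1.0 / eps)


def hhl_cost(n, sparsity, kappa, eps):
    """Rough HHL scaling: log(N) * s^2 * kappa^2 / eps."""
    return math.log2(n) * sparsity ** 2 * kappa ** 2 / eps


# Hypothetical problem settings: (size, condition number).
for n, kappa in [(10**3, 1000), (10**6, 10), (10**9, 10)]:
    classical = classical_cg_cost(n, sparsity=4, kappa=kappa, eps=0.01)
    quantum = hhl_cost(n, sparsity=4, kappa=kappa, eps=0.01)
    winner = "HHL" if quantum < classical else "classical"
    print(f"N={n:>10}, kappa={kappa:>4}: fewer steps for {winner}")
```

The logarithmic dependence on N is where the exponential advantage comes from, while the heavier dependence on κ and ε is why the advantage only appears for very large, well-conditioned problems.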

The Promise: How Quantum Could Green AI

The vision is tantalizing. Imagine:

- Training GPT-5 on a quantum-accelerated backend that requires half the power of current supercomputers.

- Running inference (chat responses, translations, recommendations) on quantum-enhanced circuits that drastically reduce GPU usage.

- Using quantum optimization algorithms to streamline supply chains for AI hardware itself, from chip design to logistics.

A 2024 report by Boston Consulting Group projected that quantum computing could reduce AI training energy by 20–40% by 2035, depending on algorithmic breakthroughs and hardware scaling.

While these percentages may sound modest, at global scale they could translate into gigawatts of avoided power demand, comparable in emissions terms to removing millions of cars from the road.

The Roadblocks: Why We’re Not There Yet

Of course, there’s a gap between theory and practice.

- Hardware limits: IBM’s Condor processor, announced in late 2023, has 1,121 qubits, while Google’s Willow chip, announced in late 2024, has 105. Raw qubit counts are only part of the picture: fault tolerance and error correction remain enormous challenges. To run AI-scale problems, we’d likely need millions of stable qubits.

- Algorithm maturity: While promising, quantum algorithms for AI are still experimental. Implementing HHL or quantum machine learning on real systems often fails due to noise.

- Energy of quantum itself: Building and running quantum computers requires cryogenic cooling and specialized infrastructure. Today that overhead is not offset by useful work: per useful operation, a quantum processor still costs more energy than a GPU.

So, while quantum has long-term promise, short-term Green AI requires classical solutions—like more efficient GPUs (e.g., NVIDIA’s H100), optimized training (e.g., sparse models), and renewable-powered data centers.

Hybrid Models: The Bridge Between Now and the Quantum Future

The most realistic near-term path is hybrid computing. Instead of replacing classical AI entirely, quantum algorithms can handle specific bottlenecks:

- Optimization tasks in training (e.g., adjusting learning rates or fine-tuning weights).

- Sampling tasks in generative models (quantum random sampling can reduce overhead).

- Search and recommendation engines where high-dimensional vector searches dominate.
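The division of labor in a hybrid pipeline can be sketched in a few lines: a classical optimizer proposes parameters, a quantum device evaluates a circuit, and the result feeds back into the next update. In the toy example below, the quantum call is replaced by a classical stand-in (the expectation value of Z after an RY(θ) rotation on |0⟩, which equals cos θ), and the gradient comes from the parameter-shift rule, which needs only two extra circuit evaluations. All function names, the learning rate, and the step count are illustrative assumptions, not any vendor's API.

```python
import math


def quantum_expectation(theta):
    """Stand-in for a quantum device call.

    Classically simulates <Z> after RY(theta) applied to |0>,
    which equals cos(theta). A real pipeline would run a circuit here.
    """
    return math.cos(theta)


def parameter_shift_gradient(f, theta):
    """Gradient from two shifted evaluations (parameter-shift rule)."""
    return 0.5 * (f(theta + math.pi / 2) - f(theta - math.pi / 2))


def hybrid_minimize(theta=0.1, learning_rate=0.4, steps=50):
    """Classical gradient descent wrapped around the 'quantum' evaluation."""
    for _ in range(steps):
        grad = parameter_shift_gradient(quantum_expectation, theta)
        theta -= learning_rate * grad  # classical update step
    return theta, quantum_expectation(theta)


theta_opt, value = hybrid_minimize()
print(f"theta = {theta_opt:.4f}, expectation = {value:.4f}")
```

The point of the pattern is that the quantum processor is called only for the narrow subroutine, while scheduling, optimization, and data handling stay on classical hardware.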

Microsoft, through its Azure Quantum initiative, is already experimenting with hybrid pipelines. Google’s Quantum AI division is reportedly working on quantum-inspired tensor processing units. These efforts reflect a shift toward incremental, not revolutionary, gains.

Quantum vs. Other Paths to Green AI

The push toward sustainable AI is not a single-track journey. Quantum algorithms are compelling, but they are only one piece of a diverse landscape of strategies. To understand why quantum matters, it helps to compare it with other efforts currently underway.

Chip-Level Efficiency

NVIDIA, AMD, and Google are all racing to make AI chips more efficient. NVIDIA’s H100 Tensor Core GPUs are estimated to be 3x more energy efficient per parameter trained compared to the A100 chips just a few years earlier. Specialized accelerators like Google’s TPUs or startups like Cerebras (with wafer-scale processors) are pushing performance-per-watt improvements even further.

These classical innovations will keep improving, but they deliver incremental, not exponential, gains. Moore’s Law may be slowing, but architectural innovations help squeeze out more efficiency each generation.

Model Compression and Smarter Architectures

Green AI is also about making the models themselves smaller and smarter:

- Sparse models activate only parts of a neural network during inference, reducing compute needs.

- Distillation techniques train smaller models to mimic large ones, cutting power requirements.

- Mixture-of-Experts architectures allow models to selectively use only a subset of parameters for each task.

For example, OpenAI’s reported use of mixture-of-experts in GPT-4 and its successors is widely thought to be motivated by efficiency as well as performance. These techniques can reduce inference costs by 50% or more.
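To make the mixture-of-experts idea concrete, here is a minimal sketch of top-k routing: a gate scores every expert, only the k best are run, and their outputs are blended. The toy experts, gate scores, and function names are hypothetical and chosen only to show that most parameters stay idle for any given input. Production systems add details such as load balancing that are omitted here.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(gate_scores, k):
    """Pick the k highest-scoring experts and renormalize their weights."""
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return {i: probs[i] / norm for i in top}


def moe_forward(x, experts, gate_scores, k=2):
    """Run only the selected experts and blend their outputs."""
    weights = route_top_k(gate_scores, k)
    return sum(w * experts[i](x) for i, w in weights.items())


# Hypothetical toy setup: 8 experts, each a simple scaling function.
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0)]
gate_scores = [0.1, 2.0, -1.0, 0.5, 1.5, -0.3, 0.0, 0.2]

weights = route_top_k(gate_scores, k=2)
active_fraction = len(weights) / len(experts)
output = moe_forward(1.0, experts, gate_scores, k=2)
print(f"Active experts: {sorted(weights)}, fraction used: {active_fraction:.0%}")
```

With 2 of 8 experts active, only a quarter of the expert parameters take part in each forward pass, which is where the compute savings come from.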

Renewable-Powered Data Centers

The carbon footprint of AI is not only about how much energy it consumes, but where that energy comes from. Google, Microsoft, and Amazon have each pledged to power their operations with renewable or carbon-free energy by 2030 or earlier, though the exact targets and accounting methods differ. In regions like Scandinavia, some data centers are already powered by hydropower and cooled by natural Arctic air.

However, renewable alignment is uneven. In water-stressed areas, data centers have come under scrutiny for diverting freshwater resources. And renewable energy, while clean, doesn’t automatically reduce the absolute demand for electricity.

Why Quantum Is Different

Quantum computing enters this landscape not as a replacement, but as a potential multiplier. Unlike chip upgrades or compression techniques that improve efficiency linearly, quantum algorithms could—if realized—offer exponential improvements in specific bottlenecks such as linear algebra operations or optimization tasks.

Think of it this way:

- Chip improvements = incremental savings each generation.

- Model compression = smarter use of current resources.

- Renewables = greener inputs for the same high demand.

- Quantum algorithms = entirely new math that could slash the demand itself.

In this sense, quantum is not just another efficiency hack—it represents a paradigm shift. While hardware scaling and energy sourcing will remain essential, quantum offers a way to fundamentally change the rules of computation.

The Geopolitics of Quantum Green AI

The pursuit of Green AI through quantum algorithms isn’t just scientific—it’s geopolitical.

- United States: Billions are flowing through the CHIPS and Science Act, with quantum R&D as a major pillar. The U.S. sees quantum not just as a tool for AI, but as a bulwark against China’s tech rise.

- China: Investing heavily in quantum supremacy, with labs in Hefei pushing forward on both AI and quantum integration. China has also emphasized independence in rare earth elements (REEs), knowing rare earths are critical for quantum chips and superconductors.

- Europe & Japan: Positioning themselves as ethical leaders in Green AI, with a focus on sustainability, energy reduction, and cooperative frameworks.

- Smaller players: Countries like Canada and Singapore are carving niches in quantum software and hybrid systems.

If quantum AI enables massive energy savings, the countries that lead in this field will hold both technological and environmental influence—a new kind of “green superpower.”

Smaller Players in the AI Ecosystem

While the giants—Google, Microsoft, OpenAI, and Baidu—are racing ahead, smaller players may actually benefit disproportionately from quantum breakthroughs.

Why? Because energy costs are a major barrier to entry. Training an LLM can cost tens of millions of dollars in compute and electricity. If quantum algorithms reduce that threshold, smaller labs and startups might re-enter the race.

This could democratize AI research, reversing a trend where only trillion-dollar companies can train frontier models.

Looking Ahead: Timelines and Possibilities

When might we actually see quantum-powered, energy-efficient AI? Analysts disagree.

- Optimistic view: By the early 2030s, practical quantum accelerators could plug into cloud platforms, offering measurable gains.

- Skeptical view: Fault-tolerant, scalable quantum AI may remain decades away, making classical Green AI the only viable option for now.

- Middle ground: Hybrid systems and quantum-inspired algorithms will deliver incremental but valuable improvements by 2027–2030.

What is certain is that without intervention, AI’s carbon footprint will balloon unsustainably. Whether through quantum, better classical chips, or both, the industry must find ways to align progress with sustainability.

Conclusion

The story of AI is no longer just about intelligence—it’s about sustainability. As LLMs grow larger and more capable, their power demands grow too, threatening to make intelligence itself unsustainable.

Quantum algorithms offer a glimpse of a future where intelligence is not only powerful, but efficient. While the road is long and the challenges steep, the pursuit of Green AI is not optional—it is existential.

The reality is that we will likely need all of the above: better chips, smarter models, renewable energy, and—eventually—quantum breakthroughs. But the allure of quantum is that it speaks not just to today’s trade-offs, but to tomorrow’s possibilities.

As Mattias Knutsson, Strategic Leader in Global Procurement and Business Development, has put it: “Sustainability isn’t about one silver bullet—it’s about a portfolio of solutions. But quantum’s promise reminds us that sometimes, entire industries can be reshaped by a new approach to old problems.”

If we succeed, Green AI powered by quantum algorithms could become more than a sustainability initiative. It could mark the dawn of a new computing era—where intelligence grows, but the planet breathes easier.